This post originally appeared on my blog Biotech, Baseball, Big Data, Business, Biology…

The world would be a simpler place, although maybe a much more boring and predictable one, if every aspect of performance could be measured directly. My completely unoriginal thought here is that one of the reasons sports appeal to so many people is because they provide clarity. In a confusing, complex world where the NSA is sucking up our information like a Dyson vacuum sucks feathers in a henhouse, and we’re told this is for our own good, clarity can be refreshing.

The simple view of an athlete’s performance is that all the accolades (or jeers), all the milestones (or flops), all the accumulated statistical totals (or lack thereof) are because of that athlete’s ability: his or her drive, passion, training, and natural ability. And that performance is measured via the statistics each sport collects and chooses to honor and promote. Performance is right there, what more do you need? What more could you want?

But much as we might find the simple view intuitive and appealing, it’s also incorrect. Not only are some of those statistics, at best, clearly crude proxies for true ability, they are also often (always?) dependent upon context. Where and to whom did that quarterback throw all those touchdown passes? Which coach directed that basketball player during the prime of her career to play in a style that complemented (or confounded) her natural tendencies and strengths? What elements of that slugger’s personal life were in shambles the year he broke into the major leagues and thrived/struggled, and what difference did it make?*

If sports analysis is moving in any direction, I like to think it’s moving towards a nuanced, humble view of sports performance that accepts the statistics, the measured performance, the team won-loss record, as proxies at best, distant cousins twice-removed from what we are most curious about: who’s good? How good? Was/were he/she/they ever the best? What does this record mean in absolute terms, if such a Platonic thing could ever exist? We might try to find better ways to measure performance and context, but we’ll always be approaching the asymptote, never quite getting there.

And if athletic performance is so hard to measure, how much harder is it to measure those whose actions are another step yet away from the statistics, the solid measurements produced on the field?

What makes a good manager or coach, and how can we tell?

This is a topic of endless debate, and for good reason. Although they are not often paid like it, there is among many a feeling that the manager or head coach is one of the key elements underlying athletic and team success. As Bum Phillips said of Bear Bryant, “He could take his’n and beat your’n, or take your’n and beat his’n.” This is maybe the ultimate expression of the belief that a coach is what makes the team what it is.

Whether that’s true or not is the question, though. It’s clearly not simple. There have been efforts in the sports analysis community to figure out how much coaches and managers matter, although sometimes these efforts lean a little too heavily on retrospective analysis. For example, “these managers’ teams had winning won-loss records, so therefore they are better managers. Let’s look at the traits they have in common and say those are the traits that let us classify managers into good and bad.” These kinds of analyses are, I believe, over-fitting the data, and it’s often not long before contrary examples pop up.

So what to do? Well, a working paper put out by the National Bureau of Economic Research showed one possible way (thanks to @freakonomics and @marketplaceAPM).

The methodology may not be completely applicable to the sporting environment, or even to most business environments, but sports, I think, is a closer match than most because of the nature of player and coach movements (as we’ll see in a bit).

This study, by researchers at Stanford and the University of Utah, attempted to answer the question of how much bosses are worth to employee performance. And the method they used, frankly, was based on brute force. They first had to find a business situation that would offer them a huge sample size (23,878 workers, 1,940 bosses, and 5,729,508 worker-day measurements of productivity) and a clearly quantifiable and electronically captured measure of productivity. They found both in technology-based services (TBS). Think of jobs like retail clerks or call center operators where specific actions are repeated and logged; the specific business that was studied remains nameless as a condition of the research. And the third characteristic that made this work is that this particular company also moves employees from boss to boss on a regular basis, in general once or twice a year.

Let me digress for just a second to expand on why this is so important. In clinical research the gold standard is the double-blinded, placebo-controlled trial. Which this was not. But it’s a good deal more rigorous than an anecdotal, under-powered observational study. Essentially, their study design is a retrospective, (effectively) randomized crossover study. This allows the performance of each individual to be compared both within the period of time he or she is working for a given boss and across different bosses. The accumulation of so many data points allowed the researchers to build statistical models that could isolate the effect of specific bosses on performance even given the vast amounts of noise that are inherent in the day-to-day performance of these employees.

In addition, their model is designed to discover, a priori, which bosses are best rather than relying on any information from the company under study. In other words, factors such as won-loss records and championships and media savvy don’t enter into the equation. Whether the company or the researchers are going back to their data and corroborating it now with surveys and opinions of the employees, bosses, and upper management, I don’t know, but that would be fascinating, wouldn’t it?

To give a very high-level summary of their work, they created a mixed model of human capital as the product of talent and effort. Each of those two elements was then further broken down into components that are and are not under the influence of one’s boss. Next, estimation methods were used to approximate the relative effect of different components within the model, including those due to the boss, based on the shape of the overall dataset.**

They uncovered several possible effects in their analysis, the primary one being that a top boss can produce about a 10% increase in the productivity of his or her group relative to the worst bosses. They also found that a good boss seemed to affect worker retention, and that there was a small but significant effect of pairing good workers with good bosses.

Generalizing the specific findings directly in any way to sports management is completely unwarranted. There are several key differences between the situation they analyzed and the team environment that should not be overlooked: the diversity of actions taken by any individual athlete in a team setting (as opposed to rote, repetitive work like taking reservations in a call center); the effect of peers, which is likely greater in a sports environment than in the work situation described in this study (where workers are generally autonomous in their tasks); and the fact that athletes often get different bosses by moving between establishments (teams), whereas the current study examined a single company.

However, what I think is worth exploring is the question of whether a similar methodology could be applied to sports teams. Let me just say that I will not attempt an exploration myself, I’m just pointing out the possibilities. So anyone hoping for a big take-home message can stop now. Sorry for taking five minutes of your life!

Here are what I see as the requirements of a sport that would allow generation of a large and diverse enough dataset.

1) Specific measurements of output. As discussed above in the way-too-long-winded introduction, one thing sports has plenty of is measurements of output. Except soccer. What do people measure in soccer? YouTube video highlights of great runs followed by missed kicks?***

2) A large number of transitions of coaches/managers and players between situations. Fortunately in this age of free agency, trades and hot seats, there are routinely numerous changes of players and coaches/managers every season. Also fortunately, players and coaches/managers often get multiple chances with different teams and situations.

3) Enough data. This is a tough one. Off the top of my head it seems baseball and basketball are really the only sports that have enough granularity, a long enough season, and sufficient numbers of teams and players to make this work. Maybe hockey. American football, probably not.

However, it seems worth a try. To explore this idea further with baseball as an example, one could choose to isolate one component of performance such as a hitting statistic. Since we would want to measure something that is both generally agreed upon as positive and also something that stabilizes relatively quickly to best reflect the effect of coaching, one could pick strikeout rate (which stabilizes after 60 plate appearances, or PA), walk rate (120 PA), or singles rate (290 PA). It should be stated up front that this means only the effect of the manager on that particular skill will be seen. Probably the entire analysis would need to be repeated for each of several offensive statistics to create a composite and granular picture of how a given manager influences players under his direction.

One could then use individual game performance as the time component of the model, collect data on that specific metric over time, and relate that to which managers a given player had and for how long. The null hypothesis would be that managers have no effect, and so what we would look for in the model’s results are signs that specific managers do make a substantial difference in the performance of the players under their instruction, compared to those same players before and after being on that manager’s team.
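To make that a little more concrete, here is a minimal sketch on simulated data. To be clear about the assumptions: this is plain least squares with one dummy variable per player and per manager, not the estimation method from the NBER paper; players are reassigned to managers at random each season, which real baseball certainly does not do; and every name and number in it (`simulate_player_games`, `fit_manager_effects`, the 20% baseline strikeout rate, the size of the manager effects) is made up for illustration.

```python
import numpy as np


def simulate_player_games(n_players=60, n_managers=6, n_seasons=4,
                          games_per_season=50, noise_sd=0.2, seed=0):
    """Simulate a per-game rate (e.g. strikeouts per PA) for each player.

    All parameter values are illustrative assumptions, not real data.
    """
    rng = np.random.default_rng(seed)
    player_skill = rng.normal(0.20, 0.04, size=n_players)
    manager_effect = rng.normal(0.0, 0.02, size=n_managers)
    # Assumption: each season, every player lands with a random manager.
    assignment = rng.integers(0, n_managers, size=(n_players, n_seasons))
    players = np.repeat(np.arange(n_players), n_seasons * games_per_season)
    managers = np.repeat(assignment.ravel(), games_per_season)
    noise = rng.normal(0.0, noise_sd, size=players.size)
    y = player_skill[players] + manager_effect[managers] + noise
    return players, managers, y, manager_effect


def fit_manager_effects(player_ids, manager_ids, outcomes):
    """Least-squares fit of outcome = player effect + manager effect.

    Only differences between managers are identified, so the returned
    manager effects are centered to have mean zero.
    """
    player_ids = np.asarray(player_ids)
    manager_ids = np.asarray(manager_ids)
    y = np.asarray(outcomes, dtype=float)
    n_players = int(player_ids.max()) + 1
    n_managers = int(manager_ids.max()) + 1
    rows = np.arange(y.size)
    # Design matrix: one dummy column per player, then one per manager.
    X = np.zeros((y.size, n_players + n_managers))
    X[rows, player_ids] = 1.0
    X[rows, n_players + manager_ids] = 1.0
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    effects = coef[n_players:]
    return effects - effects.mean()


if __name__ == "__main__":
    players, managers, y, true_effect = simulate_player_games()
    estimated = fit_manager_effects(players, managers, y)
    for m, (est, true) in enumerate(zip(estimated, true_effect - true_effect.mean())):
        print(f"manager {m}: estimated {est:+.4f}, simulated {true:+.4f}")
```

The point of the sketch is the structure, not the numbers: because players move between managers, each player acts as his own control, and the manager terms soak up whatever is left over. Under the null hypothesis, the estimated manager effects should be indistinguishable from noise.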

Is there enough data for a signal to be seen? You know, in my day job as a genomics researcher this is probably the main question I get from scientists wanting to perform an experiment: is the number of experimental subjects big enough? And my answer is always the unsatisfying, “We won’t know for sure until we do the experiment and compare the natural variation to the effect size.” Same answer here.
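For a rough feel of what "compare the natural variation to the effect size" means here, a back-of-the-envelope calculation can help. The sketch below assumes each plate appearance is an independent coin flip with the same probability, which is a simplification, and the 20% baseline rate is an illustrative number rather than a measured one. The function name `min_detectable_difference` is my own.

```python
import math


def min_detectable_difference(base_rate, pa_per_group, z=1.96):
    """Approximate smallest gap in a per-PA rate that stands out from noise.

    Compares two equal-sized groups of plate appearances, treating each PA
    as an independent Bernoulli trial (an assumption), and returns the
    difference that would sit about z standard errors away from zero.
    """
    se = math.sqrt(2.0 * base_rate * (1.0 - base_rate) / pa_per_group)
    return z * se


if __name__ == "__main__":
    for pa in (600, 6_000, 60_000):
        gap = min_detectable_difference(0.20, pa)
        print(f"{pa:>6} PA per group: differences under ~{gap:.4f} are lost in the noise")
```

With 600 PA per group (very roughly one full season for one hitter), the gap works out to about 0.045, or four to five percentage points of strikeout rate. Because the noise shrinks with the square root of the sample size, it takes 100 times as many plate appearances to see an effect one tenth that size, which is why pooling many players across many managers is the whole game.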

And why bother? Well, I go back to what I said earlier about approaching the asymptote and trying to learn more. Not just in sports, but in so many other parts of life, there are elements that right now are in the realm of intuition and anecdote and subjectivity. Who’s a good CEO? What public policy interventions do good and, more important perhaps, are the most cost effective? Wired magazine just had a nice article about the use of controlled trials to measure the actual effect of public policy interventions in the developing world. In our search to make the world a better and more understandable place, we owe it to ourselves to keep asking questions and trying to come up with ways to answer them.

*Notice here, by the way, that you can take either situation–thriving or failing–and make up a completely believable story in your head about how that player’s personal life played a role in his performance. How he rose above the conflict, or the field was his refuge, or his anger or frustration fed his on-field performance. Alternatively, how he’s a tragic figure, his potential derailed by drugs/philandering/emotions, making him an all too human and very sympathetic figure. This is because our minds are programmed to make up stories, to find cause and effect, to indulge in the narrative fallacy. Be careful of that. It will screw up your thinking faster than anything else.

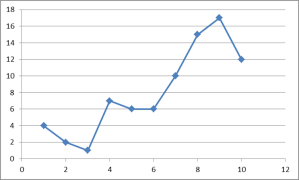

**Just for fun, here’s one of the equations from their model.

This roughly translates as: An individual worker (i)’s output (q) at time t is equal to the ability of the mean worker (alpha sub zero) plus the specific worker’s innate ability (alpha sub i) plus the set of variables outside the worker-boss interaction (X sub it times capital Beta) plus the ability of an average boss (d sub 0t) divided by team size (N sub jt) to the theta power, where theta is related to public versus private time with the boss plus the ability of the current boss (d sub jt) divided by the team size to theta. This equation relates to the current effect. A longer version of the equation tries to take into account the effect of past bosses and the persistence of boss effects.
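Transcribing that verbal description into notation gives roughly the following. This is my reconstruction from the wording above, not a copy of the paper's typeset equation; any error term or additional subscripts in the original are not described here and so are left out.

```latex
q_{it} = \alpha_0 + \alpha_i + X_{it}\,\mathrm{B}
       + \frac{d_{0t}}{N_{jt}^{\theta}}
       + \frac{d_{jt}}{N_{jt}^{\theta}}
```

Here $i$ indexes the worker, $j$ the current boss, and $t$ time, so both boss terms shrink as the team size $N_{jt}$ grows whenever $\theta > 0$.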

***I am reminded of one of the fine haikus inspired by the 1994 World Cup tournament in the US, source sadly lost to me although if anyone remembers it, let me know:

“Run, run, run, run, run

Run, run, run, run, run, run, run

Run, run, pass, shoot, miss.”